13. Point Pattern Analysis: Clusters#

Topics:

Mapping point clusters

Clustering points

13.1. Point Randomness#

Characterizing the overall point pattern (randomness):

Random

Uniform (Dispersed)

Clustered

13.2. Locate Clusters#

Knowing that a point distribution is clustered does not necessarily give information about where the clusters reside.

Mapping clusters:

Calculate spatial variation of point density.

Useful if points are samples.

Clustering points:

Identify specific points that are close to each other.

Useful if points are the “population.”

13.3. Mapping Clusters (Kernel Estimation)#

Areas of high point density indicate clusters.

Naïve point density mapping:

Use a regular grid in space.

Calculate the density of points within a circular neighborhood at each grid center.

\(Density = Number\ of\ points / \pi d^2\)

13.4. Kernel Density Estimation (KDE)#

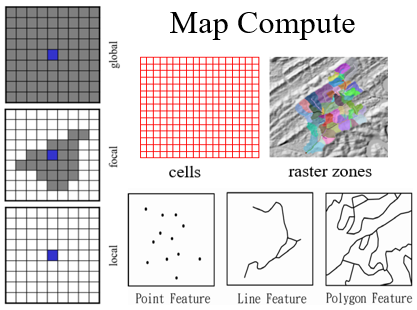

A focal operation where weights are defined by a continuous kernel function.

The weight is highest at the center (focus) and decreases to zero at the neighborhood boundary (bandwidth).

Provides a smoother surface than naïve density mapping.

13.5. Issues with KDE#

Choice of bandwidth (radius) significantly impacts the result.

Small bandwidth: Shows local variation and noise.

Large bandwidth: Shows broad trends but hides local clusters.

Choice of kernel function (e.g., Quartic, Gaussian) has less impact than bandwidth.

13.6. Clustering Points#

Grouping points into categories based on spatial proximity.

Goal: Find groups of points that are “closer” to each other than to points outside the group.

13.7. K-Means Clustering#

Partitions \(n\) points into \(k\) clusters.

Procedure:

Randomly select \(k\) points as initial cluster centers (centroids).

Assign each point to the nearest centroid.

Re-calculate the centroids of the new clusters.

Repeat steps 2 and 3 until centroids no longer move significantly.

Issue: You must specify the number of clusters (\(k\)) in advance.

13.8. Density-Based Spatial Clustering (DBSCAN)#

Classifies points into three categories:

Cores: Points inside a cluster with at least \(m-1\) points within distance \(r\).

Borders: Points inside a cluster but with fewer than \(m-1\) points within distance \(r\).

Noise: Points not belonging to any cluster.

Does not require specifying the number of clusters in advance.

Can identify clusters of arbitrary shapes.

13.9. DBSCAN in the Map Compute Framework (MCF)#

Viewed as a series of Neighborhood Relation and Graph operations.

Step 1: NbrGraph (Neighborhood relation operation)

Create a distance-based neighborhood (\(r\)).

Form a graph where points are nodes and proximity forms links.

Step 2: Graph Analysis

Identify sub-graphs (connected components).

Classify sub-graphs based on the number of points (\(m\)).

Step 3: Node Classification

Nodes in small sub-graphs (\(< m\)) are noise.

Nodes in large sub-graphs (\(\ge m\)) are core or border based on their degree (number of links).

13.10. Summary: Mapping vs. Clustering#

Mapping (KDE) results in a continuous surface (raster) showing the intensity of events.

Clustering (DBSCAN, K-Means) results in categorical assignments (attributes) for the original point features.

Choice of method depends on whether you want to visualize a “heat map” or identify distinct groups for further analysis.